Although the news is overshadowed by the tragic passing of my co-author, Paul Zimmermann, I want to share that our joint article, “Questioning the ‘transparency-fix’ in the fight against corruption: The example of Transparency International,” has been published in Critical Policy Studies. Please see the abstract below:

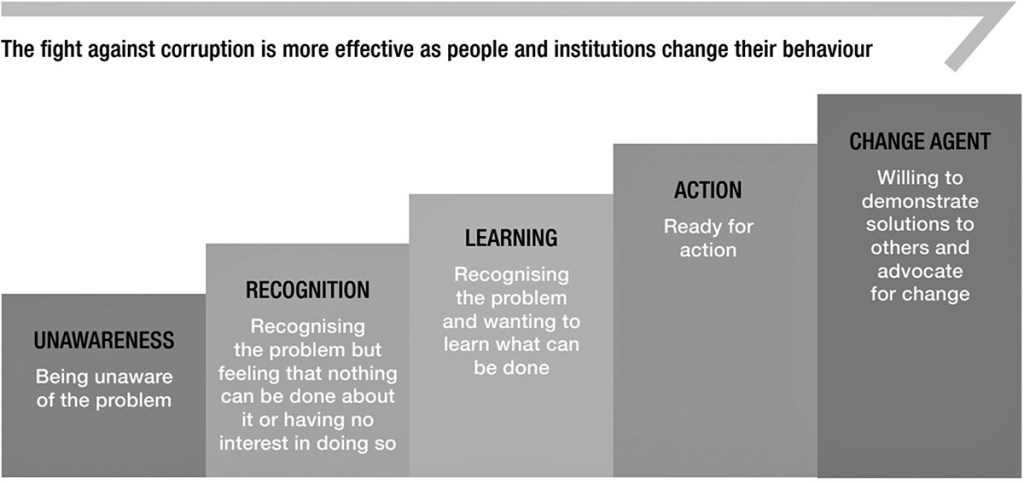

Increasingly, transparency is seen as a panacea in the fight against the ‘cancer of corruption’ and as a solution that fixes problems associated with all sorts of organizational misbehavior. In this paper, we turn the given into a question and study transparency not as a solution to the problem of corruption, but rather as a historically contingent form of problematization that links specific problem constructions with specific technologies for governing behavior. Drawing on the Foucauldian concepts of ‘problematization’ and ‘moral technologies,’ we analyze the NGO Transparency International as a critical case with strategic importance for the more general problem of disentangling the ‘transparency-power nexus’ and of understanding the politics of regulation in the name of transparency.

The article is available open access at the journal’s website.